Zero-Encapsulation Kubernetes Networking: Calico BGP with ToR Switch Integration

In the world of high-performance Kubernetes deployments, network overhead can become a significant bottleneck. Traditional overlay networks like VXLAN or IPIP add encapsulation layers that consume CPU cycles, introduce latency, and reduce overall throughput. For organizations running latency-sensitive applications or high-bandwidth workloads, every microsecond and every bit of throughput matters. This is where zero-encapsulation networking with Calico BGP and Top-of-Rack (ToR) switch integration becomes a game-changer.

Calico’s BGP mode eliminates overlay overhead by using the Border Gateway Protocol to advertise pod routes directly to your network infrastructure. Instead of wrapping packets in additional headers, each Calico node peers with your ToR switches and announces the pod address blocks it hosts, so the fabric routes pod IPs natively and the network is flat at layer 3. Depending on workload, NIC offload support, and MTU, this approach can deliver up to 30-50% better throughput than overlay solutions, with lower CPU utilization on your nodes.

The benefits extend beyond raw performance. Zero-encapsulation networking simplifies troubleshooting and provides better visibility into traffic flows, because packet captures and flow logs show real pod IPs rather than tunnel endpoints. However, it requires careful planning and a deep understanding of both Kubernetes networking and BGP routing. This article will guide you through implementing a production-ready Calico BGP setup with ToR switch integration, covering everything from initial configuration to performance optimization and troubleshooting.

Prerequisites

Before diving into the implementation, ensure you have:

- Advanced Kubernetes knowledge: Understanding of CNI plugins, network policies, and cluster networking concepts

- BGP routing experience: Familiarity with BGP concepts, AS numbers, route advertisements, and peering relationships

- Network infrastructure access: Administrative access to ToR switches with BGP capability (Cisco, Juniper, Arista, etc.)

- IP address planning: A well-designed IP allocation strategy for nodes, pods, and services

- Calico experience: Basic understanding of Calico components and configuration

- Production environment: This guide assumes a production or production-like environment with proper network segmentation

Hardware requirements:

- ToR switches supporting BGP (most enterprise switches from major vendors)

- Kubernetes nodes with sufficient network interfaces

- Network infrastructure supporting ECMP (Equal-Cost Multi-Path) for optimal load balancing

Understanding Zero-Encapsulation Architecture

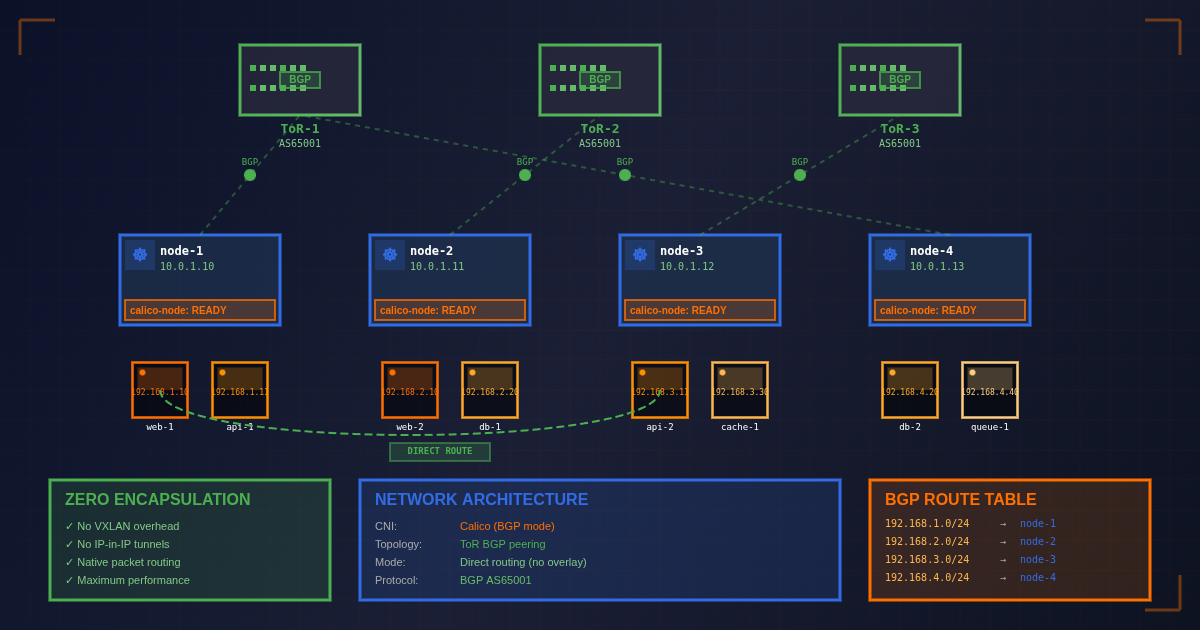

Network Topology Overview

In a zero-encapsulation setup, your Kubernetes cluster becomes an integral part of your data center’s routing fabric. Each node acts as a BGP speaker, advertising its pod CIDRs directly to ToR switches. The switches then propagate these routes throughout your network infrastructure, enabling direct pod-to-pod communication without tunneling.

The architecture consists of several key components:

- Calico BGP speakers on each node that peer with ToR switches

- ToR switches configured as BGP route reflectors or full mesh peers

- Pod IP pools allocated from your data center’s IP space

- Route advertisements for node-specific pod subnets

BGP Peering Models

Calico supports multiple BGP peering models for ToR integration:

- Full mesh peering: Every node peers with every ToR switch (suitable for small clusters)

- Route reflector model: ToR switches act as route reflectors, reducing peering complexity (route reflection is an iBGP mechanism, so nodes and switches must share an AS)

- Spine-leaf with route reflectors: Hierarchical design for large-scale deployments
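
To make the trade-off between these models concrete, the sketch below counts BGP sessions for two designs: every node peering with every ToR switch, and each node peering only with the ToR switches in its own rack. The function names and the example cluster sizes are illustrative assumptions, not part of Calico.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SessionCount:
    total: int       # BGP sessions across the whole cluster
    per_switch: int  # sessions each ToR switch must terminate


def every_node_to_every_tor(nodes: int, tors: int) -> SessionCount:
    """Each node peers with every ToR switch in the fabric."""
    return SessionCount(total=nodes * tors, per_switch=nodes)


def rack_local(nodes_per_rack: int, racks: int, tors_per_rack: int) -> SessionCount:
    """Each node peers only with the ToR switches in its own rack."""
    total = nodes_per_rack * tors_per_rack * racks
    return SessionCount(total=total, per_switch=nodes_per_rack)


if __name__ == "__main__":
    # Hypothetical cluster: 6 racks, 20 nodes and 2 ToR switches per rack.
    print(every_node_to_every_tor(nodes=120, tors=12))
    print(rack_local(nodes_per_rack=20, racks=6, tors_per_rack=2))
```

The per-switch figure is the one to compare against the neighbor limit of your switch platform.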

Calico BGP Configuration

Basic BGP Pool Configuration

The foundation of zero-encapsulation networking starts with properly configured IP pools.

apiVersion: projectcalico.org/v3
kind: IPPool
metadata:
  name: bgp-pool-datacenter
spec:
  cidr: 10.244.0.0/16
  # Disable both encapsulation modes so pod traffic is routed natively
  ipipMode: Never
  vxlanMode: Never
  natOutgoing: true
  nodeSelector: "all()"
  blockSize: 26
---
apiVersion: projectcalico.org/v3
kind: BGPConfiguration
metadata:
  name: default
spec:
  logSeverityScreen: Info
  # Nodes peer with the ToR switches instead of with each other
  nodeToNodeMeshEnabled: false
  asNumber: 64512
  # Advertise the service ClusterIP range to BGP peers
  serviceClusterIPs:
    - cidr: 10.96.0.0/16
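
The blockSize value determines how many per-node address blocks the pool contains, and therefore roughly how many pod routes the switches may have to carry. The following sketch works through that arithmetic for the pool above using Python's standard ipaddress module; the function name is an assumption made for illustration.

```python
import ipaddress


def pool_capacity(cidr: str, block_size: int) -> dict:
    """Return how many blocks a pool holds and how many addresses each block has."""
    pool = ipaddress.ip_network(cidr)
    if not pool.prefixlen <= block_size <= pool.max_prefixlen:
        raise ValueError("block size must lie between the pool prefix and the address width")
    return {
        "blocks": 2 ** (block_size - pool.prefixlen),
        "addresses_per_block": 2 ** (pool.max_prefixlen - block_size),
        "total_addresses": pool.num_addresses,
    }


if __name__ == "__main__":
    # The pool from the manifest above: a /16 carved into /26 blocks.
    print(pool_capacity("10.244.0.0/16", 26))
```

For 10.244.0.0/16 with blockSize 26 this gives 1024 blocks of 64 addresses each. A node that runs more than 64 pods needs more than one block, so the number of advertised routes can exceed the number of nodes.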

ToR Switch BGP Peering Configuration

Establishing BGP peering relationships between Calico nodes and ToR switches requires careful configuration on both sides.

# BGP passwords are stored in Secrets. Create them in the namespace where
# calico-node runs and grant calico-node read access to them.
apiVersion: v1
kind: Secret
metadata:
  name: tor-switch-01-bgp-secret
type: Opaque
stringData:
  password: secure-bgp-password
---
apiVersion: v1
kind: Secret
metadata:
  name: tor-switch-02-bgp-secret
type: Opaque
stringData:
  password: secure-bgp-password
---
# Global peers: every node peers with both ToR switches
apiVersion: projectcalico.org/v3
kind: BGPPeer
metadata:
  name: peer-tor-switch-01
spec:
  peerIP: 192.168.1.1
  asNumber: 65001
  password:
    secretKeyRef:
      name: tor-switch-01-bgp-secret
      key: password
  keepOriginalNextHop: true
  nodeSelector: "all()"
---
apiVersion: projectcalico.org/v3
kind: BGPPeer
metadata:
  name: peer-tor-switch-02
spec:
  peerIP: 192.168.1.2
  asNumber: 65001
  password:
    secretKeyRef:
      name: tor-switch-02-bgp-secret
      key: password
  keepOriginalNextHop: true
  nodeSelector: "all()"
---
# Per-node peers: an alternative to the global peers above. Use one set or
# the other for a given node and ToR pair, not both.
# NOTE: verify that your Calico release supports bfdEnabled before applying;
# BFD support varies by version and distribution.
apiVersion: projectcalico.org/v3
kind: BGPPeer
metadata:
  name: peer-node-01-tor-switch-01
spec:
  peerIP: 192.168.1.1
  asNumber: 65001
  nodeSelector: "kubernetes.io/hostname == 'node-01'"
  bfdEnabled: true
---
apiVersion: projectcalico.org/v3
kind: BGPPeer
metadata:
  name: peer-node-02-tor-switch-02
spec:
  peerIP: 192.168.1.2
  asNumber: 65001
  nodeSelector: "kubernetes.io/hostname == 'node-02'"
  bfdEnabled: true
---
apiVersion: projectcalico.org/v3
kind: BGPPeer
metadata:
  name: peer-node-03-tor-switch-01
spec:
  peerIP: 192.168.1.1
  asNumber: 65001
  nodeSelector: "kubernetes.io/hostname == 'node-03'"
  bfdEnabled: true
---
apiVersion: projectcalico.org/v3
kind: BGPPeer
metadata:
  name: peer-node-04-tor-switch-02
spec:
  peerIP: 192.168.1.2
  asNumber: 65001
  nodeSelector: "kubernetes.io/hostname == 'node-04'"
  bfdEnabled: true
---
apiVersion: projectcalico.org/v3
kind: BGPPeer
metadata:
  name: peer-node-05-tor-switch-01
spec:
  peerIP: 192.168.1.1
  asNumber: 65001
  nodeSelector: "kubernetes.io/hostname == 'node-05'"
  bfdEnabled: true
---
apiVersion: projectcalico.org/v3
kind: BGPPeer
metadata:
  name: peer-node-06-tor-switch-02
spec:
  peerIP: 192.168.1.2
  asNumber: 65001
  nodeSelector: "kubernetes.io/hostname == 'node-06'"
  bfdEnabled: true
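
The per-node BGPPeer resources above differ only in the node name and the ToR switch they point at, so they lend themselves to generation. The sketch below builds the same resources as Python dictionaries and prints them as JSON, which YAML parsers also accept. The function name and the alternating assignment of nodes to switches are assumptions for illustration, and the bfdEnabled field is left out because its availability depends on your Calico version.

```python
import json


def per_node_peers(node_names, tor_ips, tor_asn):
    """Build one BGPPeer per node, alternating between the given ToR switches."""
    if not tor_ips:
        raise ValueError("at least one ToR switch IP is required")
    peers = []
    for index, node in enumerate(node_names):
        tor_index = index % len(tor_ips)
        peers.append({
            "apiVersion": "projectcalico.org/v3",
            "kind": "BGPPeer",
            "metadata": {"name": f"peer-{node}-tor-switch-{tor_index + 1:02d}"},
            "spec": {
                "peerIP": tor_ips[tor_index],
                "asNumber": tor_asn,
                "nodeSelector": f"kubernetes.io/hostname == '{node}'",
            },
        })
    return peers


if __name__ == "__main__":
    nodes = [f"node-{n:02d}" for n in range(1, 7)]
    for peer in per_node_peers(nodes, ["192.168.1.1", "192.168.1.2"], 65001):
        print(json.dumps(peer, indent=2))
```

Keeping the node list in one place makes it harder for a new node to be added to the cluster without a matching peering definition.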

Advanced Route Advertisement Control

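Fine-grained control over route advertisements is crucial for production deployments. The following manifests define one IP pool per workload class, assigned to nodes through node labels, together with BGPFilter resources that control which pool prefixes are exported. Because Calico does not allow IP pools to overlap, these three /18 pools replace the single 10.244.0.0/16 pool defined earlier rather than coexisting with it: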

# The pool nodeSelector matches node labels, so label each node with the
# workload class it is meant to host.
apiVersion: projectcalico.org/v3
kind: IPPool
metadata:
  name: pool-high-priority
  labels:
    workload-class: high-priority
    priority: "100"
spec:
  cidr: 10.244.0.0/18
  ipipMode: Never
  vxlanMode: Never
  natOutgoing: true
  blockSize: 26
  nodeSelector: "workload-class == 'high-priority'"
---
apiVersion: projectcalico.org/v3
kind: IPPool
metadata:
  name: pool-standard
  labels:
    workload-class: standard
    priority: "200"
spec:
  cidr: 10.244.64.0/18
  ipipMode: Never
  vxlanMode: Never
  natOutgoing: true
  blockSize: 26
  nodeSelector: "workload-class == 'standard'"
---
apiVersion: projectcalico.org/v3
kind: IPPool
metadata:
  name: pool-batch-processing
  labels:
    workload-class: batch-processing
    priority: "300"
spec:
  cidr: 10.244.128.0/18
  ipipMode: Never
  vxlanMode: Never
  natOutgoing: true
  blockSize: 26
  nodeSelector: "workload-class == 'batch-processing'"
---
# A BGPFilter takes effect only when a BGPPeer lists it under spec.filters.
# matchOperator is In rather than Equal because Calico advertises per-node
# /26 blocks, which fall inside the /18 pool CIDR but never equal it.
# NOTE: cidr, matchOperator and action are standard rule fields. Verify
# setCommunities and setLocalPreference against your Calico release before
# applying; they may not be accepted by every version.
apiVersion: projectcalico.org/v3
kind: BGPFilter
metadata:
  name: filter-high-priority
spec:
  exportV4:
    - cidr: 10.244.0.0/18
      matchOperator: In
      action: Accept
      setCommunities:
        - "64512:100"
      setLocalPreference: 100
---
apiVersion: projectcalico.org/v3
kind: BGPFilter
metadata:
  name: filter-standard
spec:
  exportV4:
    - cidr: 10.244.64.0/18
      matchOperator: In
      action: Accept
      setCommunities:
        - "64512:200"
      setLocalPreference: 200
---
apiVersion: projectcalico.org/v3
kind: BGPFilter
metadata:
  name: filter-batch-processing
spec:
  exportV4:
    - cidr: 10.244.128.0/18
      matchOperator: In
      action: Accept
      setCommunities:
        - "64512:300"
      setLocalPreference: 300
---
apiVersion: projectcalico.org/v3
kind: BGPFilter
metadata:
  name: global-export-filter
spec:
  exportV4:
    - cidr: 10.244.0.0/18
      matchOperator: In
      action: Accept
      setCommunities:
        - "64512:100"
    - cidr: 10.244.64.0/18
      matchOperator: In
      action: Accept
      setCommunities:
        - "64512:200"
    - cidr: 10.244.128.0/18
      matchOperator: In
      action: Accept
      setCommunities:
        - "64512:300"
    # Catch-all: reject every prefix not accepted above
    - cidr: 0.0.0.0/0
      matchOperator: In
      action: Reject
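
A filter rule only affects the routes its matchOperator selects, and the choice matters here because the pool CIDRs are /18 while the advertised routes are per-node blocks (/26 with the blockSize used in this article). The sketch below is a simplified model of first-match rule evaluation, written to show the difference between Equal and In. It is not Calico's implementation, and the default action when no rule matches is an assumption that should be checked against the BGPFilter documentation.

```python
import ipaddress


def rule_matches(route: str, rule_cidr: str, operator: str) -> bool:
    """Simplified matching: Equal compares prefixes exactly, In tests containment."""
    r = ipaddress.ip_network(route)
    c = ipaddress.ip_network(rule_cidr)
    if operator == "Equal":
        return r == c
    if operator == "NotEqual":
        return r != c
    if operator == "In":
        return r.subnet_of(c)
    if operator == "NotIn":
        return not r.subnet_of(c)
    raise ValueError(f"unknown operator: {operator}")


def evaluate(route: str, rules: list, default: str = "Accept") -> str:
    """Return the action of the first matching rule, or the assumed default."""
    for rule in rules:
        if rule_matches(route, rule["cidr"], rule["matchOperator"]):
            return rule["action"]
    return default


def make_rules(operator: str) -> list:
    return [
        {"cidr": "10.244.0.0/18", "matchOperator": operator, "action": "Accept"},
        {"cidr": "0.0.0.0/0", "matchOperator": operator, "action": "Reject"},
    ]


if __name__ == "__main__":
    for op in ("Equal", "In"):
        for route in ("10.244.0.64/26", "10.244.192.0/26"):
            print(op, route, evaluate(route, make_rules(op)))
```

With Equal, neither /26 block matches any rule, so the catch-all on 0.0.0.0/0 never fires. With In, the first block is accepted by the pool rule and the second is rejected by the catch-all.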

ToR Switch Configuration Examples

Cisco Nexus Configuration

For Cisco Nexus switches, the BGP configuration involves enabling the BGP feature and configuring neighbors for each Kubernetes node:

! Enable BGP feature
feature bgp
! Configure BGP router with private AS
router bgp 65001
  router-id 192.168.1.1
  address-family ipv4 unicast
    ! Enable ECMP for load balancing
    maximum-paths 16
  ! Configure BGP neighbors for Kubernetes nodes
  neighbor 10.0.1.10
    remote-as 64512
    password secure-bgp-password
    address-family ipv4 unicast
      route-map CALICO-IN in
      route-map CALICO-OUT out
      ! Required for the community set by CALICO-OUT to be sent
      send-community
      maximum-prefix 1000
! Community list referenced by CALICO-IN
ip community-list standard HIGH-PRIORITY permit 64512:100
! Route maps for traffic engineering
route-map CALICO-IN permit 10
  match community HIGH-PRIORITY
  set local-preference 150
! Accept all other routes unchanged (a route-map ends with an implicit deny)
route-map CALICO-IN permit 20
route-map CALICO-OUT permit 10
  match ip address prefix-list CALICO-PODS
  set community 65001:100
! Prefix list for Calico pod networks
ip prefix-list CALICO-PODS permit 10.244.0.0/16 le 26
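
The "le 26" qualifier on the prefix list is what lets per-node blocks through while keeping longer prefixes, such as /32 host routes, out. The following sketch models the usual prefix-list matching semantics with optional ge and le bounds. It is an illustration of the logic rather than vendor code, and the function name is an assumption.

```python
import ipaddress
from typing import Optional


def prefix_list_permits(route: str, entry: str,
                        ge: Optional[int] = None, le: Optional[int] = None) -> bool:
    """Check a route against one prefix-list entry with optional ge/le bounds."""
    r = ipaddress.ip_network(route)
    e = ipaddress.ip_network(entry)
    if not r.subnet_of(e):
        return False  # the route does not fall inside the entry's prefix
    if ge is None and le is None:
        return r.prefixlen == e.prefixlen  # exact match only
    low = ge if ge is not None else e.prefixlen
    high = le if le is not None else r.max_prefixlen
    return low <= r.prefixlen <= high


if __name__ == "__main__":
    for route in ("10.244.3.64/26", "10.244.3.64/28", "10.244.3.65/32", "10.245.0.0/26"):
        print(route, prefix_list_permits(route, "10.244.0.0/16", le=26))
```

If you change the pool blockSize, the le bound has to change with it, otherwise the switch silently drops the new block routes.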

Juniper Configuration

For Juniper switches, the configuration follows a similar pattern with different syntax:

# Configure BGP group for Calico nodes
set protocols bgp group calico-nodes type external
set protocols bgp group calico-nodes peer-as 64512
set protocols bgp group calico-nodes local-as 65001
set protocols bgp group calico-nodes multipath
# Add Kubernetes nodes as neighbors
set protocols bgp group calico-nodes neighbor 10.0.1.10
set protocols bgp group calico-nodes neighbor 10.0.1.11
set protocols bgp group calico-nodes neighbor 10.0.1.12
# Configure the import policy: accept pod routes, reject everything else
set policy-options policy-statement calico-import term pod-routes from protocol bgp
set policy-options policy-statement calico-import term pod-routes from route-filter 10.244.0.0/16 orlonger
set policy-options policy-statement calico-import term pod-routes then accept
set policy-options policy-statement calico-import term everything-else then reject
# Apply the policy to the group; a policy that is defined but not applied has no effect
set protocols bgp group calico-nodes import calico-import

Network Performance Optimization

ECMP Configuration

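Equal-Cost Multi-Path (ECMP) routing is essential for maximizing bandwidth utilization in zero-encapsulation deployments. Configure your ToR switches to install multiple equal-cost paths, as the maximum-paths (Cisco) and multipath (Juniper) settings in the switch examples do.

💡 Tip: Many modern switches support 16 or more ECMP paths; check the limit for your platform. Ensure your BGP configuration advertises routes with equal attributes (AS path length, local preference, MED) so that they qualify for multipath.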
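
ECMP balances per flow rather than per packet: the switch hashes header fields and uses the result to pick one of the equal-cost next hops, so packets of a single connection stay on one path. The sketch below illustrates the idea with a software hash over the 5-tuple. Real switches use hardware hash functions and configurable field sets, so the hash choice and the function name are assumptions.

```python
import hashlib


def pick_next_hop(flow: tuple, next_hops: list) -> str:
    """Map a flow (src IP, dst IP, protocol, src port, dst port) to one next hop."""
    if not next_hops:
        raise ValueError("no next hops available")
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:8], "big") % len(next_hops)
    return next_hops[index]


if __name__ == "__main__":
    hops = ["10.0.1.10", "10.0.1.11", "10.0.1.12", "10.0.1.13"]
    counts = {hop: 0 for hop in hops}
    # 1000 hypothetical TCP flows that differ only in source port.
    for src_port in range(40000, 41000):
        flow = ("10.244.0.5", "10.244.64.9", 6, src_port, 443)
        counts[pick_next_hop(flow, hops)] += 1
    print(counts)
```

One consequence is that a single long-lived flow can never exceed the bandwidth of one path, which is why bandwidth tests should use several parallel streams.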

BGP Timers Tuning

Optimize BGP timers for faster convergence while avoiding unnecessary churn:

! Recommended BGP timer settings for production
router bgp 65001
  ! Default for all neighbors: keepalive=30s, holdtime=90s
  timers bgp 30 90
  neighbor 10.0.1.10
    ! Faster timers for critical links: keepalive=10s, holdtime=30s
    timers 10 30
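
Timers configured on the switch only take effect if the other side agrees: under RFC 4271 the two speakers use the smaller of the hold times they exchange in their OPEN messages, and a keepalive interval of one third of the hold time is the suggested ratio. The sketch below applies those two rules. It ignores explicitly configured keepalive values, which is a simplifying assumption, and the function name is hypothetical.

```python
def negotiate_timers(local_hold: int, peer_hold: int) -> tuple:
    """Return (hold_time, keepalive) in seconds for a BGP session."""
    hold = min(local_hold, peer_hold)
    if hold != 0 and hold < 3:
        raise ValueError("hold time must be 0 or at least 3 seconds")
    keepalive = hold // 3  # one third of the hold time; 0 disables keepalives
    return hold, keepalive


if __name__ == "__main__":
    # Switch configured for a 30s hold time, peer offering 90s.
    print(negotiate_timers(30, 90))
```

Without BFD, the negotiated hold time is also the worst-case time to detect a silently failed peer, so it bounds how quickly traffic can move off a dead path.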

CPU and Memory Optimization

Monitor BGP process resource utilization on both switches and nodes:

- Switch CPU: BGP route processing should consume <5% CPU under normal conditions

- Memory usage: Route table size typically 1-10MB depending on cluster size

- Calico node resources: BGP speaker process typically uses 50-100MB RAM per node

Best Practices

1. IP Address Management Strategy

Implement a hierarchical IP addressing scheme that aligns with your data center topology. Allocate IP ranges based on racks, availability zones, or failure domains to simplify routing and troubleshooting.
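
As one way to picture such a scheme, the sketch below carves a cluster-wide pod range into equal per-rack subnets with Python's ipaddress module. The rack names, the /20 size, and the function name are assumptions for illustration; each resulting subnet could back an IP pool whose nodeSelector matches a rack label.

```python
import ipaddress


def allocate_rack_pools(pool_cidr: str, racks: list, rack_prefix: int) -> dict:
    """Assign each rack the next free subnet of the given prefix length."""
    pool = ipaddress.ip_network(pool_cidr)
    subnets = pool.subnets(new_prefix=rack_prefix)
    allocation = {}
    for rack in racks:
        try:
            allocation[rack] = str(next(subnets))
        except StopIteration:
            raise ValueError(f"{pool_cidr} has no /{rack_prefix} left for {rack}") from None
    return allocation


if __name__ == "__main__":
    print(allocate_rack_pools("10.244.0.0/16", ["rack-a", "rack-b", "rack-c"], 20))
```

With per-rack ranges, a ToR switch can advertise a single aggregate upstream, and a pod IP immediately tells you which rack to look at when troubleshooting.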

2. BGP AS Number Planning

Use private AS numbers (64512-65534 for 16-bit ASNs, or 4200000000-4294967294 for 32-bit ASNs) for Kubernetes clusters and ensure they don’t conflict with existing network infrastructure. Consider using different AS numbers for different clusters or environments.
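
A small check like the one below can catch AS number mistakes before a configuration is applied. It tests whether a value falls in the private-use ranges reserved by RFC 6996; the function name and the idea of running it as a pre-deployment check are assumptions.

```python
PRIVATE_ASN_RANGES = (
    (64512, 65534),            # 16-bit private-use range
    (4200000000, 4294967294),  # 32-bit private-use range
)


def is_private_asn(asn: int) -> bool:
    """Return True if the AS number is reserved for private use."""
    return any(low <= asn <= high for low, high in PRIVATE_ASN_RANGES)


if __name__ == "__main__":
    # The two AS numbers used in this article, plus two that are not private.
    for asn in (64512, 65001, 65535, 64511):
        print(asn, is_private_asn(asn))
```

Note that 65535 sits just outside the 16-bit range: it is reserved and should not be assigned to a cluster.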

3. Route Filtering and Security

Implement strict route filters to prevent route leaks and ensure only legitimate pod CIDRs are advertised. Use BGP communities for traffic engineering and policy enforcement.

4. Monitoring and Observability

Deploy comprehensive monitoring for BGP sessions, route advertisements, and network performance metrics. Use tools like Prometheus with BGP exporters to track session state and route counts.

5. Graceful Maintenance Procedures

Develop procedures for maintaining ToR switches without disrupting cluster connectivity. Use BGP graceful restart and route dampening to minimize service impact.

6. Multi-Homing and Redundancy

Configure multiple BGP peering sessions per node when possible. Connect nodes to multiple ToR switches for redundancy and load distribution.

7. Documentation and Change Management

Maintain detailed documentation of BGP configurations, IP allocations, and network topology. Implement change management processes for network modifications.

Common Pitfalls and Solutions

1. BGP Session Flapping

Problem: BGP sessions repeatedly establish and tear down, causing route instability.

Solution: Tune BGP timers appropriately, implement BFD for faster failure detection, and ensure network connectivity is stable before enabling BGP.

2. Route Advertisement Loops

Problem: Incorrect route filtering causes routing loops or suboptimal paths.

Solution: Implement proper route maps and prefix lists. Use BGP communities to tag routes and prevent readvertisement of learned routes.

3. IP Pool Exhaustion

Problem: Running out of available IP addresses in pod CIDR blocks.

Solution: Plan IP allocation carefully with room for growth. Monitor IP pool utilization and implement automated alerting for low availability.

4. Switch Resource Exhaustion

Problem: ToR switches run out of routing table space or BGP neighbor capacity.

Solution: Understand switch limitations and plan accordingly. Use route summarization where possible and monitor resource utilization.
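
To estimate how much summarization can save, the sketch below collapses a list of advertised blocks into the smallest equivalent set of prefixes using ipaddress.collapse_addresses from the Python standard library. The input prefixes are hypothetical. Aggregation is only safe at a point where all the component routes share the same next hop, for example a ToR switch summarizing its own rack toward the spine.

```python
import ipaddress


def summarize(prefixes: list) -> list:
    """Collapse adjacent or overlapping prefixes into the fewest covering prefixes."""
    networks = [ipaddress.ip_network(p) for p in prefixes]
    return [str(n) for n in ipaddress.collapse_addresses(networks)]


if __name__ == "__main__":
    contiguous = ["10.244.0.0/26", "10.244.0.64/26", "10.244.0.128/26", "10.244.0.192/26"]
    scattered = ["10.244.0.0/26", "10.244.0.128/26"]
    print(summarize(contiguous))
    print(summarize(scattered))
```

Four contiguous /26 blocks collapse into one /24, while scattered blocks do not collapse at all. This is the practical argument for allocating pod ranges per rack.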

5. Inconsistent Network Policies

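Problem: Network policies don’t behave as expected after moving to BGP mode. Calico still enforces policy on each node regardless of encapsulation, but pod IPs are now directly reachable from the rest of the network, so rules that implicitly relied on the overlay boundary can admit unexpected traffic.

Solution: Write policies that state every allowed source explicitly, including sources outside the cluster, and test policy enforcement thoroughly in non-production environments.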

Real-World Use Cases

High-Frequency Trading Platform

A financial services company implemented zero-encapsulation networking for their high-frequency trading platform, achieving:

- 40% reduction in network latency (from 150μs to 90μs average)

- 60% improvement in throughput for market data processing

- Simplified troubleshooting with direct IP visibility in network monitoring tools

Machine Learning Training Cluster

A research organization deployed Calico BGP for their distributed ML training workloads:

- Eliminated network bottlenecks in parameter server communication

- Reduced CPU overhead by 25% on compute nodes

- Improved job completion times by 30% for large-scale training jobs

Multi-Tenant SaaS Platform

A SaaS provider used BGP with traffic engineering to implement tenant isolation:

- Implemented QoS policies using BGP communities

- Achieved network-level tenant separation without performance overhead

- Simplified compliance reporting with direct traffic flow visibility

Performance Considerations

Throughput Improvements

Zero-encapsulation networking typically delivers:

- 30-50% better throughput compared to VXLAN/IPIP overlays

- Lower CPU utilization (5-15% reduction in network processing overhead)

- Reduced memory bandwidth consumption for packet processing

Latency Optimization

Key factors affecting latency in BGP deployments:

- Switch forwarding latency: Typically 1-5μs for modern switches

- BGP convergence time: 10-30 seconds for route updates; this determines how long traffic is disrupted after a failure rather than steady-state latency

- ECMP load balancing: Can introduce microsecond-level jitter

Scalability Limits

Consider these scaling factors:

- BGP sessions per switch: Most enterprise switches support 100-500 BGP neighbors

- Routes per node: Typically 100-1000 routes per Kubernetes node

- Convergence time: Increases with network size and complexity

Testing Approach

Unit Testing BGP Configuration

Test individual BGP configurations before deploying to production:

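# Validate BGP session establishment (run on the node itself; requires root)
sudo calicoctl node status
# Query BIRD inside the calico-node pod. The namespace and pod name are
# placeholders, and the socket path is the usual default; adjust if yours differs.
kubectl exec -n <calico-namespace> <calico-node-pod> -- birdcl -s /var/run/calico/bird.ctl show protocols
# Test route advertisement
ip route show table all
# show route export needs the name of a BGP protocol listed by show protocols
kubectl exec -n <calico-namespace> <calico-node-pod> -- birdcl -s /var/run/calico/bird.ctl show route export <protocol-name>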

Integration Testing

Verify end-to-end connectivity and performance:

# Test pod-to-pod communication across nodes

kubectl run test-pod --image=busybox --rm -it -- ping <target-pod-ip>

# Measure network performance

iperf3 -c <target-pod-ip> -t 60 -P 4

# Validate route propagation

traceroute <pod-ip>

Load Testing

Simulate production workloads to validate network performance:

- Connection rate testing: Establish thousands of concurrent connections

- Bandwidth testing: Saturate network links to verify ECMP behavior

- Failover testing: Simulate node and switch failures to test convergence
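
For failover testing, a simple way to quantify convergence is to send probes at a fixed interval during the failure and measure the longest gap between successful replies. The sketch below does this for a list of timestamped probe results. The input format (millisecond timestamps paired with a success flag) and the function name are assumptions; the data could come from any prober you already use.

```python
def longest_outage_ms(probes: list) -> int:
    """Return the longest gap between the success before a loss and the next success.

    probes: (timestamp_ms, succeeded) tuples sorted by time. Losses before the
    first success or after the last success are ignored, because their true
    duration cannot be determined from the data.
    """
    longest = 0
    last_ok = None
    in_outage = False
    for timestamp, succeeded in probes:
        if succeeded:
            if in_outage and last_ok is not None:
                longest = max(longest, timestamp - last_ok)
            last_ok = timestamp
            in_outage = False
        else:
            in_outage = True
    return longest


if __name__ == "__main__":
    # Hypothetical probe log at 100 ms intervals with two lost probes.
    log = [(0, True), (100, True), (200, False), (300, False), (400, True), (500, True)]
    print(longest_outage_ms(log))
```

The resolution of the result is limited by the probe interval, so use an interval well below the convergence time you expect to see.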

Monitoring and Alerting

Implement comprehensive monitoring for:

- BGP session state and route counts

- Network throughput and latency metrics

- Switch resource utilization

- Route convergence times

Conclusion

Zero-encapsulation networking with Calico BGP and ToR switch integration represents the pinnacle of Kubernetes network performance optimization. By eliminating overlay network overhead and leveraging your existing data center infrastructure, you can achieve significant improvements in throughput, latency, and resource utilization.

Key takeaways from this implementation:

- Performance gains can be substantial - improvements of 30-50% in network throughput with reduced CPU overhead are achievable, but they depend on workload and hardware, so benchmark your own environment

- Planning is critical - successful deployments require careful IP addressing, BGP configuration, and network design

- Operational complexity increases - you’ll need deep networking expertise and robust monitoring to maintain the system

- Integration with existing infrastructure - leverage your data center’s routing capabilities rather than working around them

- Testing is essential - thoroughly validate configurations in non-production environments before deployment

The investment in implementing zero-encapsulation networking pays dividends for performance-critical applications, but requires commitment to operational excellence and deep networking expertise.